Big Ass Fans is the best-selling big fan manufacturer in the world, delivering comfort to spaces where comfort seems impossible. BAF had a problem: how to reliably plan production to meet demand. BAF was experiencing a gap between bookings forecasts vs. shipments, and this was impacting revenue and customer satisfaction. BAF turned to Smart Software for help.

BAF’s Supply Chain Manager took the lead to flesh out their planning needs and methodically address them. In his words, “it came down to fundamentals. Our planning process needed to be data driven, collaborative, and continually improved by assessing and enhancing our monthly forecasting process.”

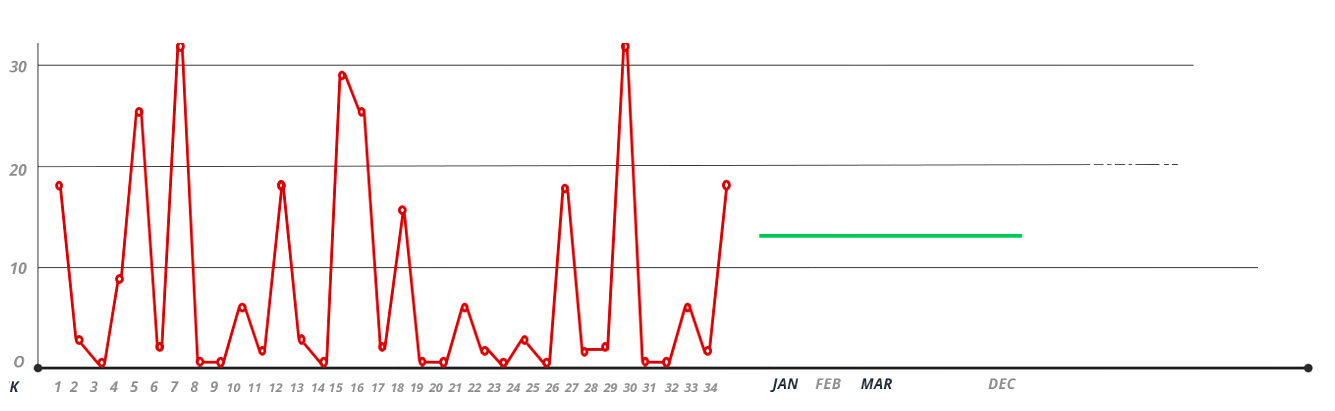

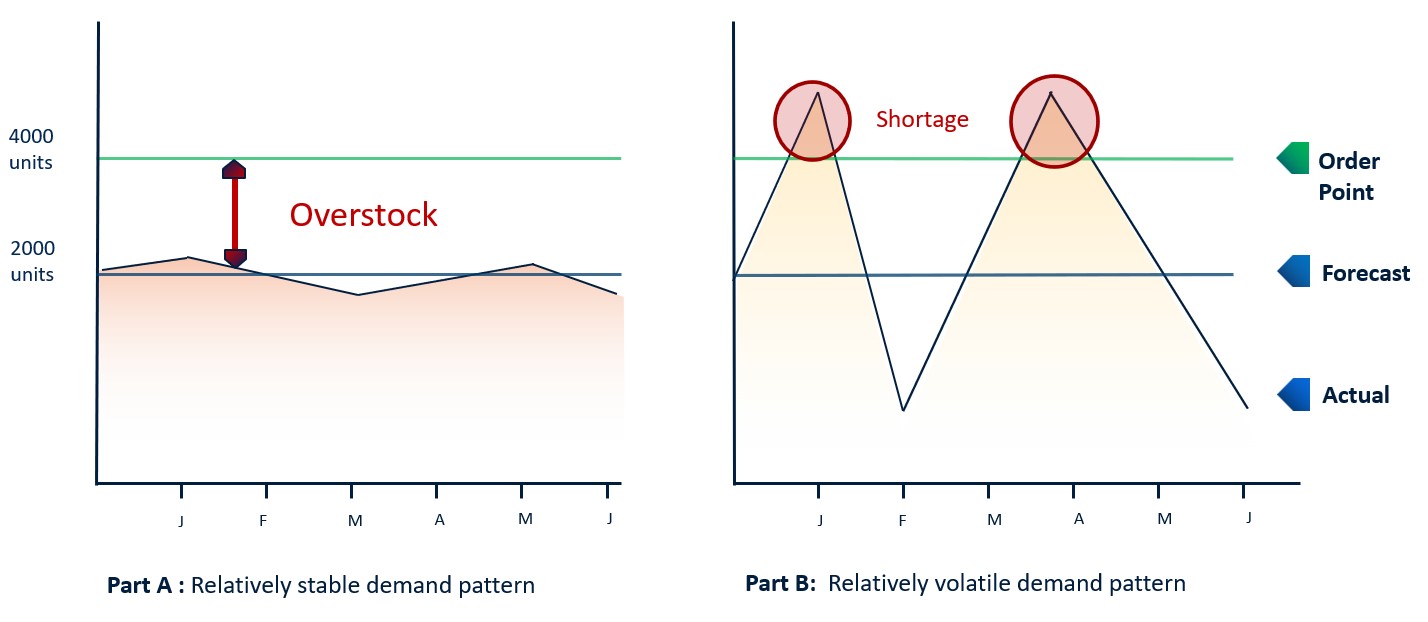

A big part of this was bringing the disparate planning processes together. Product managers produce monthly demand forecasts, while the operations team forecasts shipments and associated material requirements. BAF needed a tighter, data-driven process that combines advanced analytics with team collaboration. This would need to address seasonality, a huge factor driving demand fluctuations, incorporate input from international as well as US markets, and capture the impact of market promotions.

BAF’s Customer Service Director and S&OP Team Lead explained what this means. “Now we have one unified, global process, one shared business view that provides the framework for all of our cross-business planning.” She likens it to having one source for the truth. “Every month the entire team sees updated orders and shipments and can compare forecast against actual performance. Individual managers view business through their required business lens – by product line or service, region, international geography, channel, customer, you name it.”

“This is enabling technology that makes us better,” she continued. “Smart IP&O is, among other things, the vehicle for our monthly SIOP process. We review our own business segments then convene as a group, consider results to date, the impact of promotions, events and seasonality, and agree on our consensus plan going forward. This is an invaluable process, enabling manufacturing to stay ahead of demand and deliver what our customers need, when they need it.”

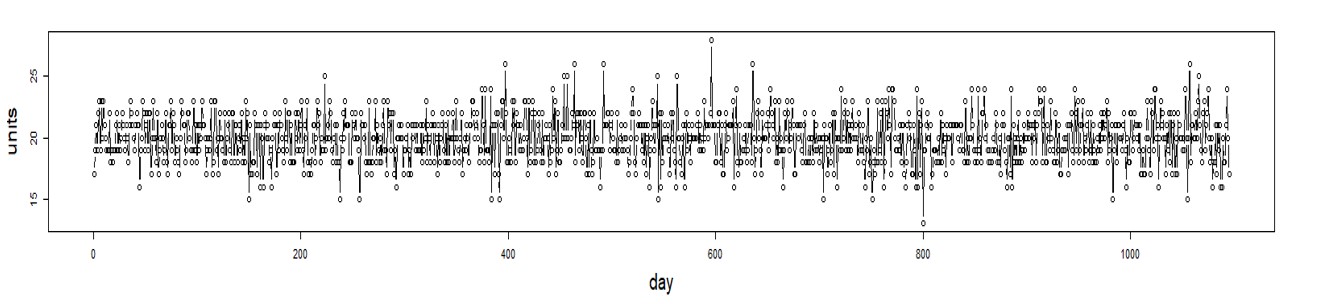

“Smart Inventory Planning & Optimization is the critical tool we use to manage our forecasts across a large and dynamic set of Products/Parts, multi-national sites, and complex supply chains,” added the Supply Chain Manager. “The ability of the software to provide a statistical forecast as baseline, allow adjustments by various subject matter experts, each recorded as ‘snapshots’ for consensus building and later use in accuracy/improvement efforts, then ultimately feed the forecast data directly into our Material Requirements Planning software is central to our S&OP process.”

BAF has refined its monthly Sales, Inventory and Operations Planning process utilizing Smart Demand Planner, Smart’s collaborative forecasting and demand planning application. Smart’s API based bi-directional integration with BAF’s Epicor Kinetic ERP automatically captures all order and shipment data that in turn drives the creation of monthly statistical forecasts. Through its monthly SIOP process, BAF product managers produce initial forecasts, share these with sales managers who can suggest adjustments, and bring together consensus plans across 25 product lines for monthly review, adjustment, and presentation to the executive team as the company’s rolling 12-month plan.

The team credits Smart Demand Planner with providing a thorough and accurate forecast of future demand that is central to BAF’s monthly SIOP process. BAF extended Smart’s utilization to its international offices, where subject matter experts manage their own forecasts. “Within Smart they can manage both demand forecasts that key on their shipments to local end users and supply forecasts based on their purchase history as key customers to BAF-US. This significantly enhances our global demand view and has improved forecast accuracy.”

About Smart Software:

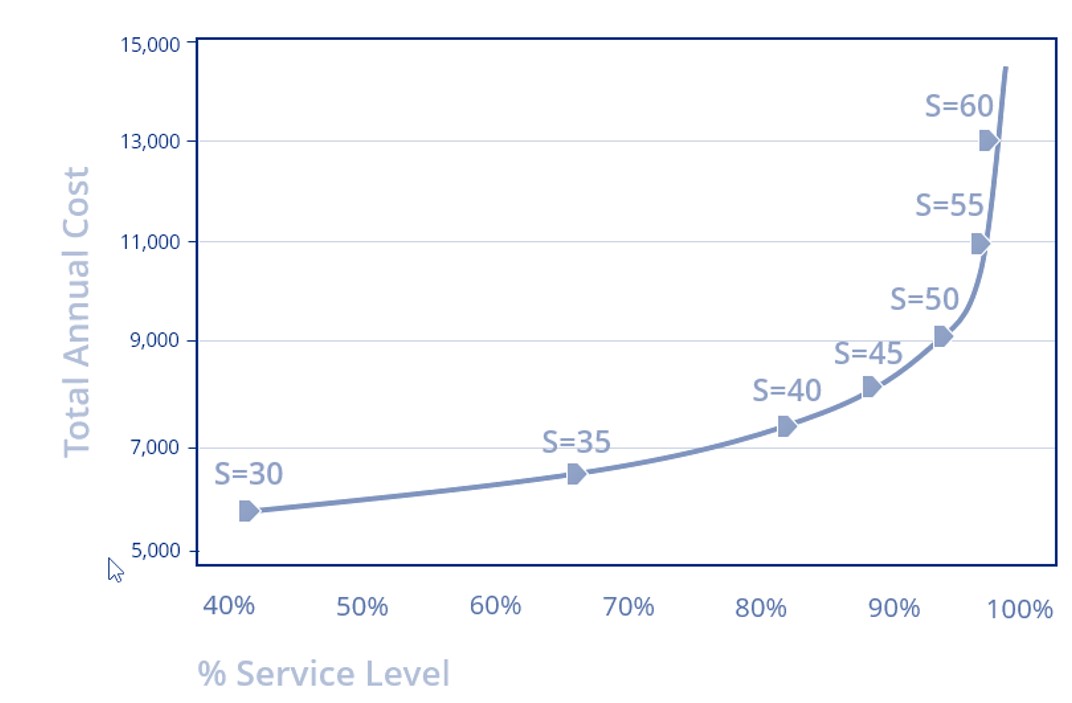

Founded in 1981, Smart Software, Inc. is a leader in providing businesses with enterprise-wide demand forecasting, planning, and inventory optimization solutions. Smart Software’s demand forecasting and inventory optimization solutions have helped thousands of users worldwide, including customers such as Disney, Arizona Public Service, and Ameren. Smart’s Inventory Planning & Optimization Platform, Smart IP&O, provides demand planners the tools to handle sales seasonality, promotions, new and aging products, multi-dimensional hierarchies, and intermittently demanded service parts and capital goods items. It also provides inventory managers with accurate estimates of the optimal inventory and safety stock required to meet future orders and achieve desired service levels. Smart Software is headquartered in Belmont, Massachusetts. Learn more at www.smartcorp.com.

About Big Ass Fans

At Big Ass Fans, we are driven by our mission to create safer, healthier, more productive environments worldwide. What started as a big idea in airflow became a revolution and is now best practice for designers, managers, and business owners across every imaginable industry and application. Today, our products are proudly spinning and serving more than 80 percent of the Fortune 500 in 175 countries. From factories to homes and everywhere in between, Big Ass Fans delivers comfort, style, and energy savings to make life more enjoyable. With more than 235 awards, 350 patents, an experiment on the International Space Station and the only HVLS Research & Design lab in the world, we go big every day.